HPC servers pack more than 1,800 cores

May 14, 2010 — by LinuxDevices Staff — from the LinuxDevices Archive

Appro announced a pair of 1U servers aimed at small- and medium-sized HPC (high performance computing) deployments. The Tetra 1326G4 and 1426G4 combine Intel or AMD processors with Nvidia Tesla M2050 GPU (graphics processing unit) cards, offering up to 24 CPU cores and 1,792 GPU cores plus as much as 3TB of storage, the company says.

LinuxDevices doesn't typically cover enterprise servers, but Appro's Tetra 1x26G4 devices are something else again. The company says the devices are aimed at "technology early adopters" in energy and government research segments of the HPC market, where computationally intensive applications are common.

Appro's Tetra 1x26G4 server

The two servers each include four Tesla M2050 GPU cards, touted by Nvidia as delivering cluster performance at 1/10th the cost and 1/20th the power consumption of quad-core, CPU-only systems. Together, the cards provide 1,792 "Cuda" cores and more than 2 Teraflops of double-precision performance, according to Nvidia and Appro. (For more details on the M2050 cards, see later in this story.)

According to Appro, the 1326G4 runs Linux or Windows as its host operating system on two eight- or twelve-core AMD Opteron 6100 ("Magny Cours") processors, supported by AMD's SR5670 northbridge and SP5100 southbridge. The server supports up to 128GB of DDR3 RAM via eight DIMM sockets, the company says.

The otherwise-similar 1426G4 uses Intel CPUs, in the form of two four- or six-core Xeon 5600 processors, supported by the 5520 chipset and ICH10R I/O controller, says Appro. This model supports up to 96GB of RAM in its eight DIMM slots, the company adds.

Both the 1326G4 and 1426G4 provide up to 3TB of storage capacity via six bays for hot-swappable, 2.5-inch SATA hard disk drives, Appro says. Each device is also said to have an available PCI Express x4 expansion slot. Meanwhile, the four M2050 GPU cards are accommodated via a riser card arrangement, the company explains.

Each server also includes two gigabit Ethernet ports, four USB 2.0 ports, a serial port, a VGA port, and an additional LAN port dedicated to IPMI (intelligent platform management interface) functionality. According to Appro, the servers include twelve heavy-duty counter-rotating fans apiece, and that's a good thing: Power consumption is said to range between 1200 and 1400 Watts.

Features and specifications provided by Appro for the 1326G4 and 1426G4 include:

- Processor:

  - CPUs:

    - 1326G4 — 2 x 8/12 core AMD Opteron 6100 (clock speed n/s)

    - 1426G4 — 2 x 4/6 core Intel Xeon 5600 (clock speed n/s)

  - GPUs — 4 x Nvidia Tesla M2050

- Chipset:

  - 1326G4 — SR5670 northbridge and SP5100 southbridge

  - 1426G4 — Intel 5520 and ICH10R

- Memory:

  - 1326G4 — Up to 128GB of RAM via 8 DIMM slots

  - 1426G4 — Up to 96GB of RAM via 8 DIMM slots

- Storage — six hot-swappable 2.5-inch SATA devices, for up to 3TB

- Expansion — 1 x PCI Express x4 slot

- Networking — 2 x gigabit Ethernet plus 1 LAN port for IPMI

- Other I/O:

  - 1 x VGA

  - 1 x serial

  - 4 x USB 2.0

- Power — 100~140VAC power supply requires 1200 Watts; 180~240VAC power supply requires 1400 Watts

- Operating range — 50 to 95 deg. F (10 to 35 deg. C)

- Dimensions — 34.5 x 17.2 x 1.7 inches (876 x 437 x 43mm)

- Weight — approx. 50 pounds (22.7kg)
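The "more than 1,800 cores" in the headline follows from simple arithmetic on the figures quoted in this story. The sketch below is a rough check, not anything supplied by Appro or Nvidia: it adds up the claimed CPU and GPU core counts and derives per-card and per-bay figures. The constant and function names are illustrative, and it assumes Nvidia's stated 520 GFlops double-precision figure applies to each of the four M2050 boards.

```python
# Figures as quoted in the article (vendor claims, not measurements).
GPU_CARDS = 4
TOTAL_GPU_CORES = 1_792
CPU_SOCKETS = 2
MAX_CORES_PER_CPU = {"1326G4": 12, "1426G4": 6}  # Opteron 6100 / Xeon 5600
DRIVE_BAYS = 6
MAX_STORAGE_TB = 3
GFLOPS_PER_CARD = 520  # assumption: Nvidia's per-board figure holds for each M2050


def summarize(model: str) -> dict:
    """Derive totals for one server model from the quoted figures."""
    cpu_cores = CPU_SOCKETS * MAX_CORES_PER_CPU[model]
    return {
        "cpu_cores": cpu_cores,
        "total_cores": cpu_cores + TOTAL_GPU_CORES,
        "gpu_cores_per_card": TOTAL_GPU_CORES // GPU_CARDS,
        # Decimal terabytes, as drive vendors count them.
        "gb_per_drive_bay": MAX_STORAGE_TB * 1000 // DRIVE_BAYS,
        "aggregate_dp_gflops": GPU_CARDS * GFLOPS_PER_CARD,
    }


if __name__ == "__main__":
    for model in MAX_CORES_PER_CPU:
        print(model, summarize(model))
```

On these numbers, both models clear the 1,800-core mark when fully populated, the 3TB storage ceiling implies 500GB per drive bay, and the four GPU cards together come out slightly above the 2 Teraflops cited by Nvidia and Appro.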

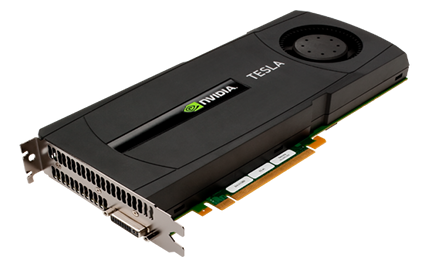

Nvidia's Teslas

The Nvidia Tesla M2050 boards used in Appro's servers are similar to the Tesla C2050 below, except that they do not include the built-in fan and cover pictured. Each board includes its own 3GB of error-correcting GDDR5 memory and provides 520GFlops of double-precision floating point performance, Nvidia says. (Meanwhile, the forthcoming C2070 will double GDDR5 memory to 6GB and provide 630GFlops, the chipmaker adds.)

Nvidia's Tesla C2050

Nvidia says the boards support the next-generation IEEE 754-2008 double-precision floating point standard, and are designed for applications such as ray tracing, 3D cloud computing, video encoding, database search, data analytics, computer-aided engineering, and virus scanning. The C2070 and C2050 support PCI Express 2.0, for "fast and high-bandwidth communication between CPU and GPU," the company adds.

Specifications provided for the Tesla C2070 and C2050 by Nvidia include:

- Memory — 3GB GDDR5 on C2050; 6GB GDDR5 on C2070

- Double-precision floating point performance (peak) — 520 GFlops to 630 GFlops

- Form factor — Dual-slot PCI Express x16; 9.75 x 4.376 inches

- Power consumption — 190 Watts (typical)

- Software development tools — Cuda C/C++/Fortran, OpenCL, DirectCompute Toolkits

- Supported operating systems - Linux, Windows XP, Windows Vista

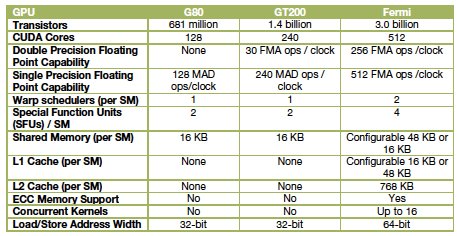

Background on Fermi

Fermi, announced last October at Nvidia's inaugural GPU Technology Conference in San Jose, amounts to a third generation of products embodying the company's "GPU computing" model. The first generation was the G80 unified graphics/computing architecture, introduced in November 2006 and later embodied in the GeForce 8800, Quadro FX 5600, and Tesla C870 GPU products. The G80 was the first GPU to replace separate vertex and pixel pipelines with a single unified processor, the first to utilize a scalar thread processor, and the first to support C, according to the company.

The second generation was the GT200, introduced in the GeForce GTX 280, Quadro FX 5800, and Tesla T10 GPUs. GT200 increased the number of streaming processor cores — subsequently referred to as "Cuda" cores — from 128 to 240. It also added "hardware memory access coalescing," improving memory access efficiency, along with double-precision floating point support, Nvidia says.

A comparison of Nvidia GPU generations

Source: Nvidia

Fermi, implemented in a GPU containing more than three billion transistors, more than doubles the number of Cuda cores, organizing them into 16 SMs (streaming multiprocessors) with 32 cores apiece. Sporting up to 6GB of GDDR5 RAM, Fermi is the first product of its type to support ECC (error correcting code), the company says.
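The core counts above can be tied together with a little arithmetic. The following sketch uses only numbers from this story (16 SMs of 32 cores, the GT200's 240 cores, and 1,792 cores across four M2050 cards); the function names are illustrative. The conclusion that each M2050 has 14 active SMs is an inference from those figures, not a specification quoted by Nvidia here.

```python
CORES_PER_SM = 32      # Cuda cores per streaming multiprocessor on Fermi
FULL_FERMI_SMS = 16    # SMs in the full Fermi design
GT200_CORES = 240      # Cuda cores in the previous generation


def cuda_cores(sm_count: int) -> int:
    """Total Cuda cores for a given number of streaming multiprocessors."""
    return sm_count * CORES_PER_SM


def active_sms(core_count: int) -> int:
    """Number of SMs implied by a core count; rejects partial SMs."""
    sms, remainder = divmod(core_count, CORES_PER_SM)
    if remainder:
        raise ValueError(f"{core_count} is not a multiple of {CORES_PER_SM}")
    return sms


full_fermi_cores = cuda_cores(FULL_FERMI_SMS)
growth_over_gt200 = full_fermi_cores / GT200_CORES
m2050_cores = 1_792 // 4  # per-card share of the server's quoted GPU cores

if __name__ == "__main__":
    print("Full Fermi cores:", full_fermi_cores)
    print("Growth over GT200: %.2fx" % growth_over_gt200)
    print("Implied active SMs per M2050:", active_sms(m2050_cores))
```

The ratio works out to a little over 2.1, consistent with the "more than doubles" claim, while the per-card figure suggests the M2050 ships with fewer SMs enabled than the full 16-SM design.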

In October, Nvidia cited the following additional features for Fermi:

- 512 Cuda cores feature the new IEEE 754-2008 floating-point standard, surpassing even the most advanced CPUs

- 8x the peak double-precision arithmetic performance of the GT200

- Nvidia Parallel DataCache, a cache hierarchy that speeds up algorithms such as physics solvers, raytracing, and sparse matrix multiplication where data addresses are not known beforehand

- Nvidia GigaThread engine, supporting concurrent kernel execution, where different kernels of the same application context can execute on the GPU at the same time (e.g., PhysX fluid and rigid body solvers)

According to Nvidia, Fermi-based products are so powerful that they can now be termed CGPUs (computational graphics processing units), and are suitable for high-performance computing (HPC) applications such as linear algebra, numerical simulation, and quantum chemistry.

At Nvidia's GPU Technology Conference, Oak Ridge National Laboratory (ORNL) announced plans to build a new supercomputer that will employ the Fermi architecture, and also announced it will be creating the Hybrid Multicore Consortium, whose goals "are to work with the developers of major scientific codes to prepare those applications to run on the next generation of supercomputers built using GPUs."

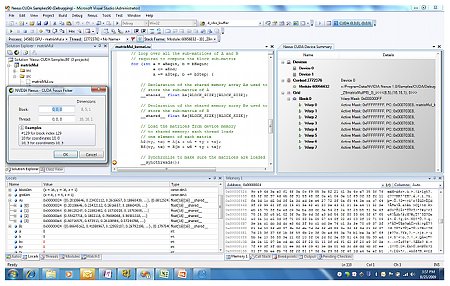

According to Nvidia, Fermi is the first product of its type that supports C++, complementing existing support for C, Fortran, Java, Python, OpenCL and DirectCompute. Fermi also supports Nexus (below), touted as "the world's first fully integrated heterogeneous computing application development environment within Microsoft Visual Studio."

Nvidia's Nexus

Nexus is composed of the following three components, according to Nvidia:

- The Nexus Debugger, a source code debugger for GPU source code, such as CUDA C, HLSL and DirectCompute. It supports source breakpoints, data breakpoints and direct GPU memory inspection. All debugging is performed directly on the hardware.

- The Nexus Analyzer, a system-wide performance tool for viewing GPU events (kernels, API calls, memory transfers) and CPU events (core allocation, threads and process events and waits) on a single, correlated timeline.

- The Nexus Graphics Inspector, which provides developers the ability to debug and profile frames rendered using APIs such as Direct3D. Developers can pause an application at any time and inspect the current frame, examining draw calls, textures, and vertex buffers, the company says.

Andy Keane, GM of the Tesla business unit at Nvidia, stated, "The launch of the Appro 1U Tetra GPU server with four Tesla 20-series GPUs illustrates Appro's innovation and leadership in high density computing systems. The Appro 1U Tetra GPU server is the first platform that provides two CPUs and four GPUs in a 1U server package, offering a terrific value proposition to HPC customers."

Availability

Appro did not cite pricing or availability for the Tetra 1326G4 and 1426G4, though the devices appear to be on sale now. Some indication of the price may be gleaned from the fact that Nvidia says its C2050 boards cost approximately $2,500 apiece.

More information on the Tetra 1326G4 and 1426G4 may be found on Appro's website. More information on the Tesla M2050 and on Nexus may be found on the Nvidia website, along with overall background on Fermi, including a downloadable white paper.

This article was originally published on LinuxDevices.com and has been donated to the open source community by QuinStreet Inc. Please visit LinuxToday.com for up-to-date news and articles about Linux and open source.